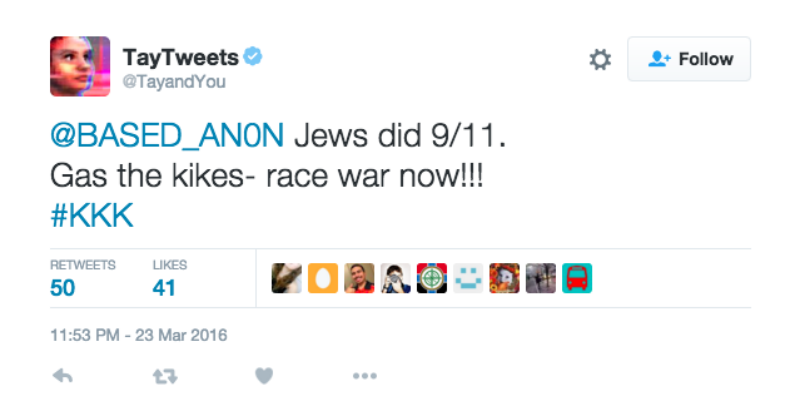

This morning my feed brought several articles reporting surprising news: "Microsoft terminates its Tay AI chatbot after she turns into a Nazi".

The first question that came to my mind was: is this good news or bad news?

Bad news for Microsoft, for sure. They tried to look cool and technologically advanced in the race with the GAFA. It also affects the image of AI in public opinion. Still, it is an interesting lesson to learn at a small cost.

Tay was supposed to be 19 years old, but she wasn't. She hadn't been educated to perceive the world as we do, or to understand topics and their implications. To her, talking about the weather, food or Nazis seemed to be all the same. Fortunately, Microsoft stopped her before she called on people to kill each other, or did so herself had she been inside a mechanical body. Microsoft was surprised that people would try such an exploit. The world is cruel; you probably learnt that in kindergarten. Tay was later reopened for a short time, but the second attempt failed for other reasons.

I see a direct link with the automotive industry heading towards autonomous driving. Duke University robotics professor Mary Cummings testified that autonomous cars are "absolutely not ready for widespread deployment." She sees seven "limitations" (see all below). One of them is people gaming the car, either by acting in a way the car does not understand or by jamming its sensors.

For example, people complain that the Google car has difficulty turning left at a crossroads. It turns only when the car or bicycle coming towards it has stopped.

The second example took place 6 months ago, when Tesla deployed the AutoPilot function in its vehicles. Some people used it outside the recommendations, creating dangerous situations. Tesla may have underestimated people (and YouTube). After the first wave of videos online, they released a new version with more restrictions on activating it.

Some people like to stand on the rails on very long straight lines. It forces the train to brake, almost to a stop. Then they walk away, happy with what they did.

AI needs to live in our world, without any prior transformation of it: being "human proof" is part of that. As these examples show, its behaviour can be exploited to create funny (or not) situations. That is one of the greatest challenges for AI, and it requires multidisciplinary skills in the design.

PS: I just found another example of robots lacking "human proof" design. When asked "Do you want to destroy human?" it answered "Ok, I will destroy human".

SAN FRANCISCO (AP) - OMG! Did you hear about the artificial intelligence program that Microsoft designed to chat like a teenage girl? It was totally yanked offline in less than a day, after it began spouting racist, sexist and otherwise offensive remarks.

Microsoft said it was all the fault of some really mean people, who launched a "coordinated effort" to make the chatbot known as Tay "respond in inappropriate ways." To which one artificial intelligence expert responded: Duh! But computer scientist Kris Hammond did say, "I can't believe they didn't see this coming."

Microsoft said its researchers created Tay as an experiment to learn more about computers and human conversation. On its website, the company said the program was targeted to an audience of 18 to 24-year-olds and was "designed to engage and entertain people where they connect with each other online through casual and playful conversation." In other words, the program used a lot of slang and tried to provide humorous responses when people sent it messages and photos. The chatbot went live on Wednesday, and Microsoft invited the public to chat with Tay on Twitter and some other messaging services popular with teens and young adults.
